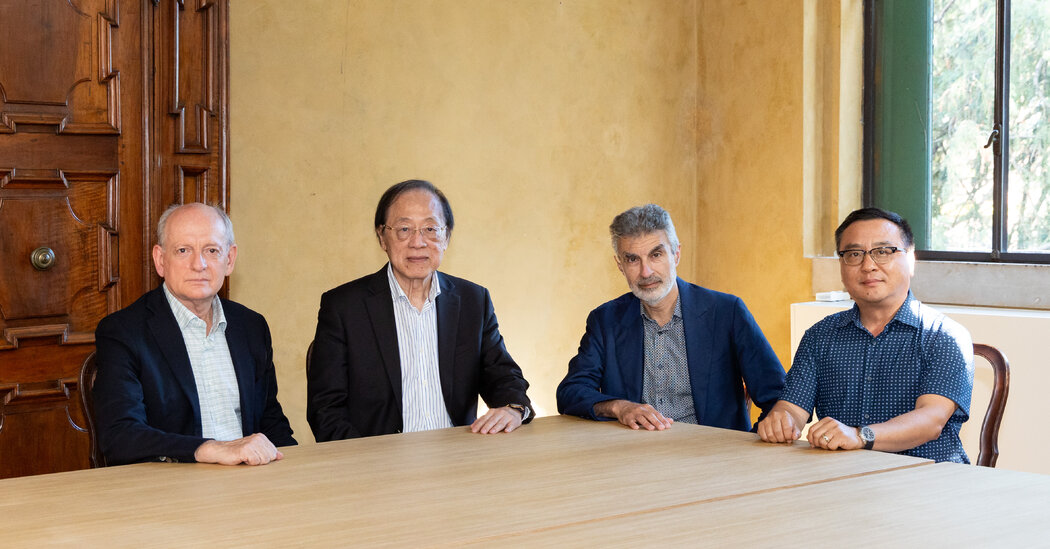

Scientists from the United States, China and other nations called for an international authority to oversee artificial intelligence.

Scientists who helped pioneer artificial intelligence are warning that countries must create a global system of oversight to check the potentially grave risks posed by the fast-developing technology.

The release of ChatGPT and a string of similar services that can create text and images on command has shown how A.I. is advancing in powerful ways. The race to commercialize the technology has quickly brought it from the fringes of science to smartphones, cars and classrooms, and governments from Washington to Beijing have been forced to figure out how to regulate and harness it.

In a statement on Monday, a group of influential A.I. scientists raised concerns that the technology they helped build could cause serious harm. They warned that A.I. technology could, within a matter of years, overtake the capabilities of its makers and that “loss of human control or malicious use of these A.I. systems could lead to catastrophic outcomes for all of humanity.”

If A.I. systems anywhere in the world were to develop these abilities today, there is no plan for how to rein them in, said Gillian Hadfield, a legal scholar and professor of computer science and government at Johns Hopkins University.

“If we had some sort of catastrophe six months from now, if we do detect there are models that are starting to autonomously self-improve, who are you going to call?” Dr. Hadfield said.

From Sept. 5 to 8, Dr. Hadfield joined scientists from around the world in Venice to talk about such a plan. It was the third meeting of the International Dialogues on A.I. Safety, organized by a nonprofit research group in the United States called Far.AI.